Last week I launched the MVP of placecard.me and decided to spend up to $20 on Google AdWords in hopes of answering a simple question: does anybody actually want to use this thing?

After looking at the preliminary data I think I have an answer!

In this post I’ll quickly talk about how I set up and ran my test, what I’ve learned from the results, and what I plan to do next.

Defining the Test

I decided to validate placecard.me through a series of tests, each one with an increasingly high bar. That way I could determine at which stage my assumptions proved wrong, rather than delivering a fully-finished product and not knowing why people weren’t buying it.

For the first test, I wanted to learn two things:

- Whether the product was easy enough to use that people would be able to figure it out.

- Whether people felt they were getting enough value from the product that they’d be willing to give me their email address.

I figured if I couldn’t hit this bar then there was no way I could ever get anyone to pay for my product, but if I could hit it then there was still a chance that my product could be successful.

As they say, “try to crush your dreams as fast as possible”.

“Try to crush your dreams as fast as possible.” Sound entrepreneurial advice.

Designing the Test into the MVP

Since I knew what I wanted to test, I could design the MVP specifically around these questions.

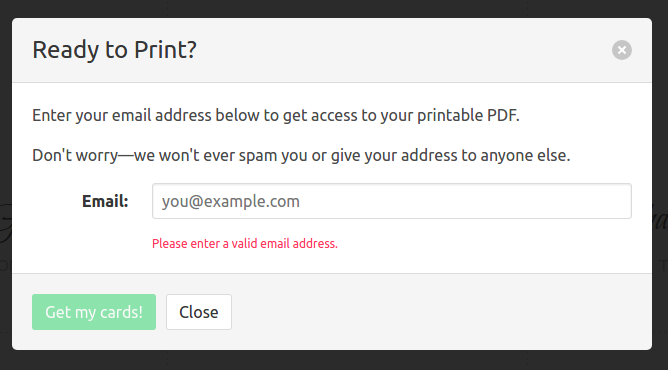

I set it up so that people could play with it as much as they want—they could fully create, customize, and preview their placecards on the site—but if they wanted to download the fully-finished PDF they needed to enter their email address.

By letting people explore the product freely I hoped to get better data about how user-friendly it was and also get them invested in the tool. Then, hopefully by the time they click “download” and get prompted for their email, they feel committed enough to provide it.

Hopefully, you only see this box after you’ve already finished making your cards and are then more inclined to provide your email.

Measurement

Of course, in order to run this test I needed to be able to measure what users were doing on the site. That’s where Google Analytics comes in.

I set up the MVP to track literally every click that you make on it. I can tell whether people click “try” from the landing page, and if they do, how far they get in the creation process, which template they chose, how they enter their guests, and most importantly whether they gave me their email and clicked the download link.

Google Analytics makes it super easy to record all of these things as “events”, and you can define certain events as “goals” to get all sorts of fun data and reports about conversions. This isn’t a post about how to use Google Analytics, but I will say that I feel I’m just scratching the surface of what it can do and it’s pretty amazing.

Getting Traffic

I decided to use Google AdWords to get my test traffic. I have heard anecdotally that Facebook ads may be more cost-effective, but in my case I wanted to make sure that the people who were arriving on my site were as likely as possible to want the thing I was offering, and I figured that people searching for things like “printable wedding place cards” were almost certainly the best candidates.

Google suggested a cost-per-click (CPC) of over a dollar for most of the keywords I wanted, but I’m cheap so I capped it at $0.50. I don’t have any visibility into what effect that has, but it seems to be driving enough of the right traffic my way.

The Results

OK, on to the interesting stuff: the results!

I wanted to answer three questions with this test:

- Is the landing page converting?

- Are people able to use the product?

- Are people getting enough value from the product to give me their email?

I’ll look at these one by one.

Is the landing page converting?

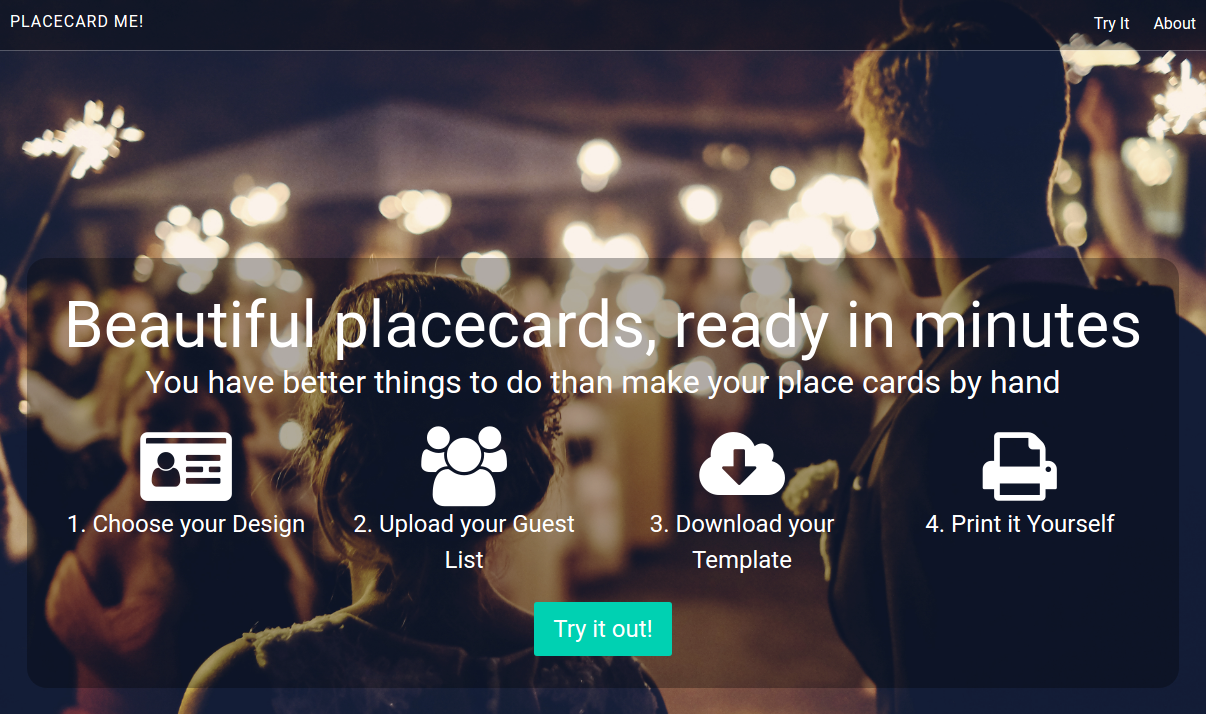

So placecard.me has a landing page where people can get quickly oriented about what the product is, and then it has the placecard maker which is what I want to get people to use. I did it this way because I was worried about disorienting people by dropping them right into a possibly-complicated placecard making tool before they understood what they were looking at.

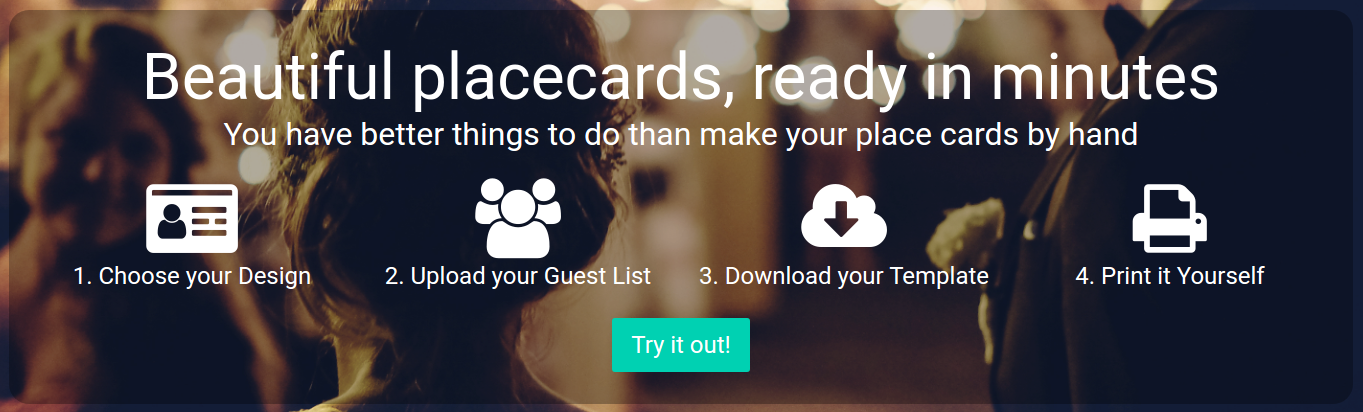

To try and get people to try the card maker, I put a giant “Try it Out” button on the landing page, which you can see here:

You want to click that button, don’t you?

So were people clicking through?

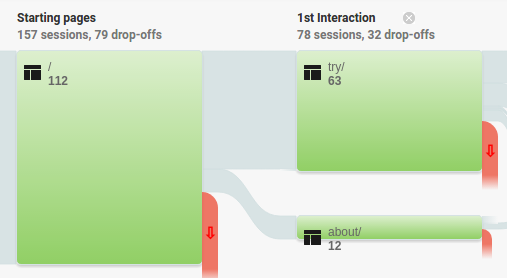

Let’s take a look:

So here we can see the 112 sessions that hit the landing page last week. Of those 112, that red waterfall-looking thing is the 38 (about 34%) that dropped off, meaning they left the site without doing anything else. Of the remaining ones, 62 (about 55%) did try it out, and another 12 (about 11%) went to the about page from the link in the header. Of those who went to the about page, 3 then went on to try it out.
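If you want to check that arithmetic yourself, here’s a quick Python sketch. The counts are the ones from the report above; the variable names and the `pct` helper are just mine for illustration.

```python
# Landing-page funnel, using the session counts quoted above.
# All names here are illustrative, not anything Google Analytics exports.
sessions = 112
dropped_off = 38        # left without doing anything else
tried_directly = 62     # clicked "Try it Out" from the landing page
visited_about = 12      # went to the about page first
tried_after_about = 3   # about-page visitors who then tried it out


def pct(part, whole):
    """Return part/whole as a percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


# Sanity check: the three first-step outcomes should account for every session.
assert dropped_off + tried_directly + visited_about == sessions

tried_total = tried_directly + tried_after_about

print(f"dropped off:    {pct(dropped_off, sessions)}%")
print(f"tried directly: {pct(tried_directly, sessions)}%")
print(f"visited about:  {pct(visited_about, sessions)}%")
print(f"tried in total: {tried_total} of {sessions} ({pct(tried_total, sessions)}%)")
```

The sanity check is the useful part: it confirms the three first-step outcomes add up to all 112 sessions before any percentages are taken.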

To summarize: 65 of the 112 visitors, or about 58% of them, are trying the product after visiting the landing page.

Not bad!

The numbers above actually represent the behavior of all traffic on the site, which includes friends and blog readers. If we limit this analysis to just ad-driven traffic, the conversion rate actually increases to 73% (although, admittedly, the sample size is very small).

Answer: the landing page converts well (enough).

Are people able to use the product?

So the landing page converts! Unfortunately, that doesn’t really matter if people can’t figure out how to use the product. So let’s see if we can figure that out.

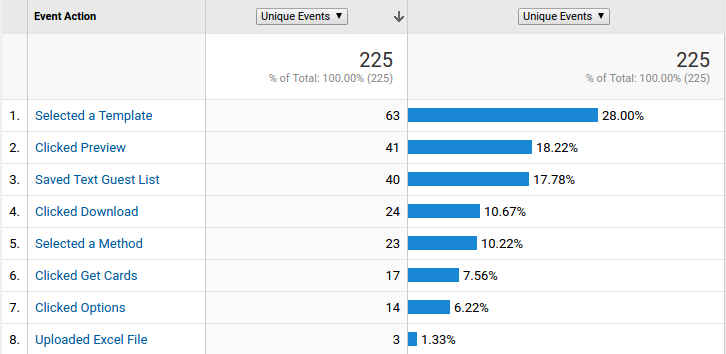

As I mentioned, I instrumented basically every action someone takes in the card maker, so it’s pretty easy to make an educated guess by looking at this data:

Google Analytics makes it really easy to see where events were triggered in each user’s session

Unfortunately, I’m not sure how many people visited this page in total (the denominator), though I’d like to think it’s the 65 from above.

Regardless, we can see that of the 63 people who selected a template, 41 (65%) got to the point where they previewed something.

I’m going to assume that anyone who didn’t select a template never really attempted to make cards. So from this data I think I can say confidently that well over half the people who tried it out were able to successfully use it.

Answer: the product is usable (enough).

Is the product valuable?

So the product is usable! However, that doesn’t really matter if it’s not providing real value for someone’s problem. As I mentioned already, I decided to test the creation of value by seeing if people would be willing to provide their email address to download their cards.

For this analysis I decided to look at ad-traffic only, since I figured anyone who is finding the site through my blog is probably not actually representative of real user behavior.

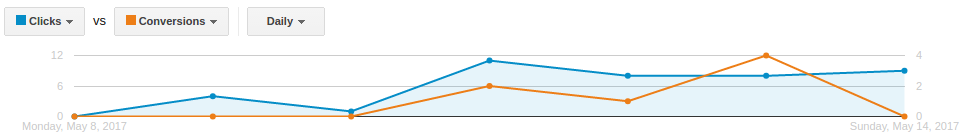

I created a “got email address” conversion in Google AdWords and linked it to the corresponding event in Google Analytics.

Then I can see how many clicks and conversions I’m getting from my ads.

The number I’m looking for here is the conversion rate, which is 7 conversions (email addresses collected) out of 41 clicks or about 17%. What this means is that about one in six people who clicked on my ads actually made placecards and gave me their email address.

This isn’t a huge number, but I was actually pretty happy with it. If my product is already good enough that one sixth of people are putting in their emails to get placecards, that seems to indicate that it might be solving a real problem for people!

As an aside—less motivating is the number next to that one: $2.45. That’s how much one email address is costing me on Google Ads right now. If I ever want to use ads as a customer acquisition channel to make money it’s going to be an uphill battle.
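To put that $2.45 in context, here’s a rough back-of-the-envelope calculation in Python. It only uses the three numbers above. The total spend and average cost per click it prints are my own estimates implied by those (rounded) figures, not numbers read off the report.

```python
# Ad numbers quoted above. cost_per_email is the rounded figure from the report,
# so everything derived from it is approximate.
clicks = 41
emails_collected = 7
cost_per_email = 2.45  # dollars

conversion_rate = emails_collected / clicks

# Implied estimates (assumptions, not reported values):
implied_spend = emails_collected * cost_per_email
implied_avg_cpc = implied_spend / clicks

print(f"conversion rate:        {conversion_rate:.1%}")
print(f"implied total spend:    ${implied_spend:.2f}")
print(f"implied avg. CPC:       ${implied_avg_cpc:.2f}")
```

If those estimates are in the right ballpark, they line up with the $20 budget and the $0.50 bid cap I set earlier.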

Answer: the product is valuable (enough).

Is any of this statistically significant?

So I currently have a sample size of 41 people. Can I really say that my email conversion rate is 17%?

Answer: absolutely not!

Forty-one is a tiny sample size, and to trust that number I’d need way more people using the site.
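To get a feel for just how fuzzy that 17% is, here’s a small Python sketch that puts a 95% Wilson score interval around 7 out of 41. The Wilson interval is my choice of method for illustration (it tends to behave reasonably for small samples), and the `wilson_interval` function name is made up for this example.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% confidence)."""
    p_hat = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p_hat + z2 / (2 * trials)) / denom
    half_width = (
        z * math.sqrt(p_hat * (1 - p_hat) / trials + z2 / (4 * trials * trials))
    ) / denom
    return center - half_width, center + half_width


low, high = wilson_interval(7, 41)
print(f"observed rate: {7 / 41:.1%}")
print(f"95% interval:  {low:.1%} to {high:.1%}")
```

For 7 out of 41 that works out to somewhere between roughly 9% and 31%, which is a pretty wide range.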

But the important thing is that it doesn’t matter. The point of this test was not to determine the precise conversion rate of my application; it was to determine whether there is any viability in this product.

From that perspective, 7 out of 41 is totally enough information to say “maybe”, which was all I needed to move on to the next test.

What’s next?

Overall, I’m quite pleased with the results of my first test. Not only was I able to answer all of my questions with data, but all of the answers came back positive (enough).

Going into this week, I was afraid that no one would want to use the card maker and this would be the end of placecard.me’s entrepreneurial journey.

Looks like this ship is gonna sail on to the next port!

The next test I need to run is figuring out whether I can make the leap from email address to dollar bills. I’ll be writing about that in the future…once I figure it out.

Stay tuned.